Researchers at the Massachusetts Institute of Technology have launched an eight-week trial to test how well preschool children can learn language skills from robots.

The experiment, held at a local Boston kindergarten, monitors how quickly children learn new vocabulary from a set of storytelling robots and how well they remember it.

Supported by a tablet computer that illustrates the stories, the robots, dubbed “DragonBots,” adapt their stories each week to match each child’s progress. The research team, led by graduate student Jacqueline Kory and Dr Cynthia Breazeal, logs and tracks the data to identify any patterns.

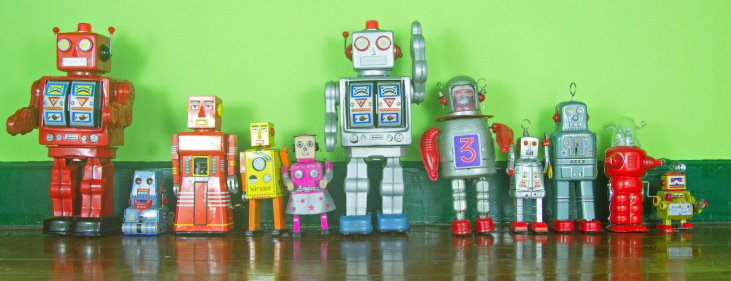

The team believe the robots bring a new dimension to classroom learning, giving students a physical presence that screen-based media such as cartoons or animated games lack. The robots currently in use have been given individual names and features, and have been programmed to use specific social skills, such as making eye contact, much as a human teacher would.

Breazeal enthuses, “There is a tremendous opportunity to develop new technology to support children’s education, especially early childhood learning.”

This experiment is one of several studies on social interaction the team has already run this year, and the results have indicated that the robots’ human-like social skills improve how readily children process information and engage in the classroom.

The work is still at an early stage of development and is somewhat hindered by a lack of voice-recognition software suited to young children.

However, the team hopes that with “upgrades to the bots’ hardware and expanded data fields,” they can move beyond controlled testing environments and revolutionize language education for the next generation.